Data sets are growing rapidly, partly because they are increasingly captured by information-sensing Internet of Things (IoT) devices. These include mobile devices, household appliances, antennas (remote sensing), software logs, cameras, microphones, RFID readers and wireless sensor networks. The world's technological per-capita capacity to store information has doubled roughly every 40 months since the 1980s. This makes Big Data a key topic for politics, science and business.
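To get a feel for what a 40-month doubling period means, the following minimal sketch converts it into a yearly and a ten-year growth factor. It assumes perfectly steady exponential growth, which is a simplification; the function name `growth_factor` is chosen here for illustration only.

```python
DOUBLING_MONTHS = 40  # doubling period quoted in the text


def growth_factor(months, doubling_months=DOUBLING_MONTHS):
    """Factor by which capacity grows over `months`, assuming steady doubling."""
    return 2 ** (months / doubling_months)


annual = growth_factor(12)    # growth factor over one year
decade = growth_factor(120)   # growth factor over ten years
print(f"per year: x{annual:.2f}, per decade: x{decade:.0f}")
```

Under this assumption, capacity grows by a little under a quarter each year and eightfold within a decade.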

What is Big Data?

The term has been in use since the 1990s. Big Data refers to data sets that are so large and complex that conventional data processing software can no longer handle them. Big Data comprises unstructured, semi-structured and structured data, with the focus on unstructured data. In general, Big Data denotes information assets characterised by such high volume, velocity and variety that they require specific technologies and analysis methods to be converted into value or usable information. The data originates from various areas, for example:

- Internet

- Mobile communication

- Financial industry

- Health care

- Traffic data

- Energy sector

Major challenges include capturing the data with purpose-built solutions, as well as storing and analysing it. Further challenges include search, use, transmission, visualisation, querying, updating and, in particular, protecting the data.

Analysing data sets can reveal new connections, for example to identify business trends or examine consumer behaviour.

Where does Big Data come from?

Collated data originates from various areas, such as:

- Transactional data, e.g. data from invoices, payment orders, warehouse and delivery notes

- Machine data, e.g. data from industrial plants or means of transport

- Real-time data from sensors (including smartphone sensors) and web logs that track user behaviour online

- Social data, e.g. data from social media services, such as Facebook likes, tweets and YouTube views

- Data from mobile devices

- Financial market data, e.g. transactional and stock exchange data

- Internet of Things (IoT)

- Machine to Machine Communication (M2M)

- Multimedia data, the largest share of data traffic on the World Wide Web

- Health care, e.g. gene analysis

- Science, e.g. climate research, genetics or geology

and much more.

In many cases the data on its own is of little significance. Real business value often arises only when these Big Data feeds are combined with conventional data, such as customer data, sales location data and turnover figures, to generate new findings, decisions and actions.
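As a rough illustration of this combination step, the sketch below joins a raw event feed with conventional customer records. All names and values (`customers`, `click_events`, `clicks_per_region`, the IDs and regions) are hypothetical and only serve the example.

```python
from collections import defaultdict

# Hypothetical conventional data: customer master records keyed by customer ID.
customers = {
    "C1": {"name": "Alice", "region": "North"},
    "C2": {"name": "Bob", "region": "South"},
}

# Hypothetical Big Data feed: raw web-log events that say little on their own.
click_events = [
    {"customer_id": "C1", "page": "/product/42"},
    {"customer_id": "C2", "page": "/product/42"},
    {"customer_id": "C1", "page": "/checkout"},
    {"customer_id": "C9", "page": "/home"},  # unknown customer, cannot be enriched
]


def clicks_per_region(events, customer_table):
    """Join each event to its customer record and count events per region."""
    counts = defaultdict(int)
    for event in events:
        customer = customer_table.get(event["customer_id"])
        if customer is None:
            continue  # skip events without a matching customer record
        counts[customer["region"]] += 1
    return dict(counts)


region_counts = clicks_per_region(click_events, customers)
print(region_counts)
```

The individual click events carry no business meaning; only the join with the customer table turns them into a figure, here activity per sales region, that a decision could be based on.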

Examples of large-scale data processing

International development: Studies on the effective use of information and communication technologies suggest that processed data can deliver important findings, but can also present unique challenges for international development. Advances in Big Data analysis offer a cost-effective way to improve decision-making in critical development areas such as health care provision, employment, economic productivity, crime, security, natural disasters and resource management.

Manufacturing: Here, improvements in delivery planning and product quality are paramount. Big Data forms the basis for transparency in the manufacturing industry, which makes it possible to remove uncertainties such as inconsistent component performance and availability. As an approach aimed at near-zero downtime and transparency, predictive manufacturing requires large volumes of data and advanced forecasting tools to process the data systematically into useful information. A conceptual framework for predictive manufacturing starts with data capture, in which different types of sensor data, such as acoustics, vibration, pressure, electric current, voltage and controller data, can be collected.
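What happens after data capture can be sketched very simply. The example below flags sensor readings that deviate strongly from a baseline recorded on a healthy machine. It is only one possible approach, a plain standard-deviation threshold, and the function name, the readings and the threshold of 3.0 are assumptions made for illustration.

```python
from statistics import mean, stdev


def flag_anomalies(baseline, readings, threshold=3.0):
    """Return indices of readings more than `threshold` standard deviations
    away from the baseline mean."""
    mu = mean(baseline)
    sigma = stdev(baseline)
    return [i for i, value in enumerate(readings)
            if abs(value - mu) > threshold * sigma]


# Hypothetical vibration amplitudes (arbitrary units) from a healthy machine.
baseline_vibration = [1.0, 1.1, 0.9, 1.0, 1.05, 0.95, 1.0, 1.1, 0.9, 1.0]
# New readings; the jump at index 3 might hint at a developing fault.
new_readings = [1.0, 1.05, 0.98, 1.9, 1.02]

suspicious = flag_anomalies(baseline_vibration, new_readings)
print(suspicious)
```

Real predictive manufacturing systems combine many sensor types and far more elaborate forecasting models; the sketch only shows the principle of turning raw sensor values into a usable signal.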

Health care: Big Data analytics has contributed to improving health care by offering, amongst other things, personalised medicine and prescriptive analytics. The volume of data generated in health care systems is not trivial, and it will continue to increase with the growing use of mHealth, eHealth and wearable technologies. This includes, for example, electronic medical data, image data, patient data and sensor data. The need to focus on data and information quality in such environments has become even greater.

Media: To understand how the media use Big Data, it is first necessary to take a closer look at the media process. The sector appears to be moving away from the traditional approach of using particular media environments such as newspapers, magazines or TV programmes, and instead addresses consumers with technologies that reach target groups at ideal times in ideal locations. The aim is to deliver a message or content that is (statistically speaking) in line with the consumer’s mindset. For example, messages (advertisements) and content (articles) are increasingly tailored to consumers’ needs, which are identified exclusively through various data mining activities.

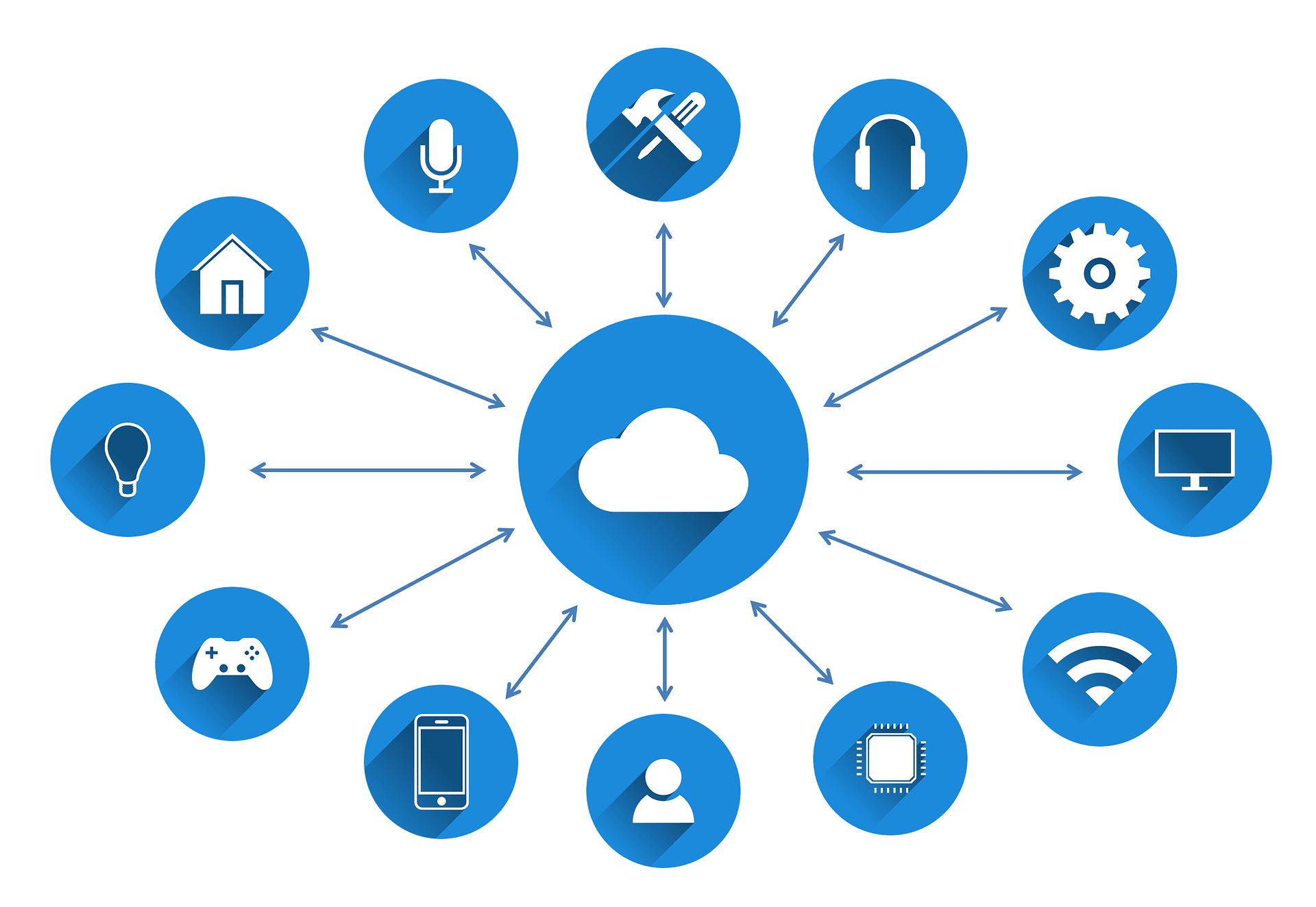

Internet of Things (IoT): The basic idea of the Internet of Things is to turn objects into smart objects and to obtain data from them. This includes everyday items such as watches or fridges, but also vehicles or railway lines. These smart objects constantly generate new information, which is first evaluated by means of Big Data analysis and then converted into knowledge. As the number of networked devices keeps increasing, more and more data is collected. As a result, evaluating the data has become more difficult, but more relevant conclusions can be drawn from it.

Challenges of Big Data

The most obvious challenge associated with high data volumes is simply storing and analysing the information. Documents, photos, audio, videos and other unstructured data can be hard to search and analyse. To cope with data growth, companies rely on different technologies. On the storage side, converged or hyperconverged infrastructure and software-defined storage can make scaling easier. Of course, companies do not just want to store data; they want to use it to reach their business objectives.

Big Data can help businesses become more competitive, but only if they gain knowledge from it that they can act on quickly. To achieve this speed, some companies rely on a new generation of ETL and analysis tools, which drastically shorten the time needed to produce reports. They invest in software with real-time analysis functions, which enables them to respond immediately to market developments.

However, companies need specialists with specific knowledge to develop, administer and operate the applications that generate these findings. This has pushed up demand for experts in this area. Companies have a number of options to address this shortage of talent. Firstly, many increase their budgets and their recruitment and retention efforts. Secondly, they offer their current staff more training opportunities in order to develop talent in-house. Thirdly, many companies turn to technology and purchase analysis solutions with self-service and/or machine learning capabilities. These tools are designed to be easy to use and to help companies reach their objectives even if they do not have many in-house specialists.

The heterogeneity of data sets leads to integration challenges. Information originates from many different places: company applications, social media streams, email systems, documents created by staff and so on. Combining and reconciling all this data so that it can be used for reporting can be difficult. Vendors offer many data integration tools designed to simplify the process. However, a large number of companies report that they have not yet solved the problem of data integration.

Closely linked to data integration is data validation. Organisations often receive similar data from different systems, and this data does not always match. For example, the e-commerce system may report one figure for daily turnover while the ERP (Enterprise Resource Planning) system reports another. The process of matching these data sets and ensuring that they are correct, usable and secure is described as data governance. Solving the challenges of data governance is very complex and generally requires a combination of policy changes and technology.
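A basic validation check of this kind can be sketched as follows. The example compares daily turnover figures from two systems and lists the days on which they disagree or on which one system has no entry. The figures, dates, the `reconcile` function and the tolerance are hypothetical and not taken from any particular product.

```python
from decimal import Decimal

# Hypothetical daily turnover as reported by two systems.
ecommerce_turnover = {
    "2024-03-01": Decimal("1250.00"),
    "2024-03-02": Decimal("980.50"),
    "2024-03-03": Decimal("1100.00"),
}
erp_turnover = {
    "2024-03-01": Decimal("1250.00"),
    "2024-03-02": Decimal("975.50"),
    "2024-03-04": Decimal("640.00"),
}


def reconcile(source_a, source_b, tolerance=Decimal("0.01")):
    """Compare two {day: amount} mappings and report mismatches and gaps."""
    issues = {}
    for day in sorted(set(source_a) | set(source_b)):
        a, b = source_a.get(day), source_b.get(day)
        if a is None or b is None:
            issues[day] = "missing in one system"
        elif abs(a - b) > tolerance:
            issues[day] = f"differs by {abs(a - b)}"
    return issues


issues = reconcile(ecommerce_turnover, erp_turnover)
for day, problem in issues.items():
    print(day, problem)
```

`Decimal` is used instead of floating-point numbers so that monetary amounts are compared exactly. Deciding which system is right when the figures differ is a governance question that code alone cannot answer.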

Big Data and Cloud Computing

Cloud computing makes it easier to offer the best technology in cost-effective packages. It not only reduces costs, it also makes a range of applications available to smaller companies. As the cloud keeps growing, the volume of information on the internet is exploding. Social media is a world of its own, in which marketers and ordinary users alike generate vast amounts of data every day. Organisations and institutions also create data on a daily basis, which becomes difficult to manage over time. Together, cloud computing and Big Data offer a solution that is both scalable and suitable for business analysis. Conventional infrastructure for data storage and management now turns out to be slower and harder to administer, whereas cloud computing provides a company with all the resources it requires. Before the cloud, companies invested huge sums in building up IT departments and then incurred further expenditure to keep hardware up to date. Now companies can host Big Data on external servers and pay only for the storage space and processing power they actually use (pay-as-you-go).
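The pay-as-you-go principle comes down to simple arithmetic, sketched below. All prices and usage figures are invented for illustration and do not reflect any real provider's rates.

```python
def pay_as_you_go_cost(storage_gb, compute_hours,
                       price_per_gb=0.02, price_per_hour=0.10):
    """Monthly cost when only used storage and compute time are billed.

    The default prices are hypothetical.
    """
    return storage_gb * price_per_gb + compute_hours * price_per_hour


# Hypothetical fixed monthly cost of running equivalent in-house hardware.
ON_PREMISES_MONTHLY = 500.0

# Hypothetical usage: (stored gigabytes, compute hours) per month.
usage = {"quiet month": (2_000, 100), "busy month": (10_000, 4_000)}

costs = {label: pay_as_you_go_cost(gb, hours)
         for label, (gb, hours) in usage.items()}
for label, cost in costs.items():
    print(f"{label}: cloud {cost:.2f} vs. fixed {ON_PREMISES_MONTHLY:.2f}")
```

With these assumed numbers the quiet month costs far less than the fixed in-house infrastructure, while the busy month costs more. The bill follows actual usage in both directions.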

Future and Outlook

Many companies will look for technologies that enable them to access data in real time and react to it. As data analysis advances, some companies have started to invest in machine learning. Machine learning is a subset of artificial intelligence: by recognising patterns in existing data, IT systems that combine this data with algorithms are able to find and develop solutions to problems independently.
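The idea of learning a pattern from existing data can be illustrated with one of the simplest techniques, a least-squares line fit. This is a deliberately minimal sketch; the data (`ad_spend`, `sales`) is made up, and real machine learning systems use far richer models.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical historical data: advertising spend and resulting sales.
ad_spend = [1.0, 2.0, 3.0, 4.0, 5.0]
sales = [12.0, 14.0, 16.0, 18.0, 20.0]  # constructed as sales = 2 * spend + 10

slope, intercept = fit_line(ad_spend, sales)
predicted = slope * 6.0 + intercept  # prediction for a spend value not seen before
print(f"slope={slope:.2f}, intercept={intercept:.2f}, prediction={predicted:.2f}")
```

The program is never told the rule behind the data; it recovers the relationship from the examples and applies it to a new input, which is the core of what the paragraph above describes.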

Image sources:

- © geralt | pixabay.com

- © geralt | pixabay.com

- © geralt | pixabay.com

- © Tumisu | pixabay.com

- © geralt | pixabay.com